Overview

Hermes Agent is an open-source AI agent from Nous Research. It runs as a local or server-hosted agent runtime with tools, memory, skills, terminal access, and messaging integrations. In EnConvo, Hermes Agent is used through its OpenAI-compatible API server. After the Hermes gateway is running, EnConvo can use Hermes like any other AI model in chat, EnConvo agents, and model-powered features.

What You Need

- Hermes Agent already installed and configured

- Hermes gateway running with the API server enabled

- The API server token from `API_SERVER_KEY` in `~/.hermes/.env`

- EnConvo with the Hermes Agent AI model provider enabled

The Hermes API server exposes OpenAI-compatible endpoints, including `/v1/models` and `/v1/chat/completions`. EnConvo’s Hermes provider uses the Chat Completions API.
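To make the Chat Completions call concrete, here is a minimal Python sketch of the kind of request an OpenAI-compatible client would send to Hermes. It assumes the API server accepts the key in an `Authorization: Bearer` header (the usual OpenAI convention, not confirmed by this page) and that the model ID is `hermes-agent`. `build_chat_request` is a hypothetical helper, not part of Hermes or EnConvo.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, prompt: str,
                       model: str = "hermes-agent") -> urllib.request.Request:
    """Build (but do not send) a POST request for <base_url>/chat/completions."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: Bearer-token auth, as in the OpenAI API.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("http://127.0.0.1:8642/v1", "<API_SERVER_KEY>", "Hello")
    print(req.get_method(), req.full_url)
    # Sending it requires a running Hermes gateway:
    # with urllib.request.urlopen(req, timeout=30) as resp:
    #     print(json.load(resp)["choices"][0]["message"]["content"])
```

The request is built separately from sending so it can be inspected without a running gateway.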

Get Base URL and API Key

Use this base URL when EnConvo runs on the same Mac as Hermes: `http://127.0.0.1:8642/v1`. The API key is the `API_SERVER_KEY` value in `~/.hermes/.env`.
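If you prefer to read the key programmatically rather than opening the file, the following sketch shows one way to do it. It assumes `~/.hermes/.env` uses plain `KEY=value` lines, optionally quoted or prefixed with `export`. `read_env_value` is a hypothetical helper written for this example.

```python
from pathlib import Path
from typing import Optional


def read_env_value(env_text: str, key: str) -> Optional[str]:
    """Return the value for `key` from dotenv-style text, or None if absent."""
    for raw_line in env_text.splitlines():
        line = raw_line.strip()
        # Skip blank lines and comments.
        if not line or line.startswith("#"):
            continue
        # Tolerate an optional "export " prefix.
        if line.startswith("export "):
            line = line[len("export "):].lstrip()
        name, sep, value = line.partition("=")
        if sep and name.strip() == key:
            # Drop surrounding quote characters, if any.
            return value.strip().strip("'\"")
    return None


if __name__ == "__main__":
    env_path = Path.home() / ".hermes" / ".env"
    if env_path.exists():
        token = read_env_value(env_path.read_text(encoding="utf-8"), "API_SERVER_KEY")
        # Avoid printing the secret itself.
        print("API_SERVER_KEY found" if token else "API_SERVER_KEY not set")
    else:
        print(f"{env_path} not found")
```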

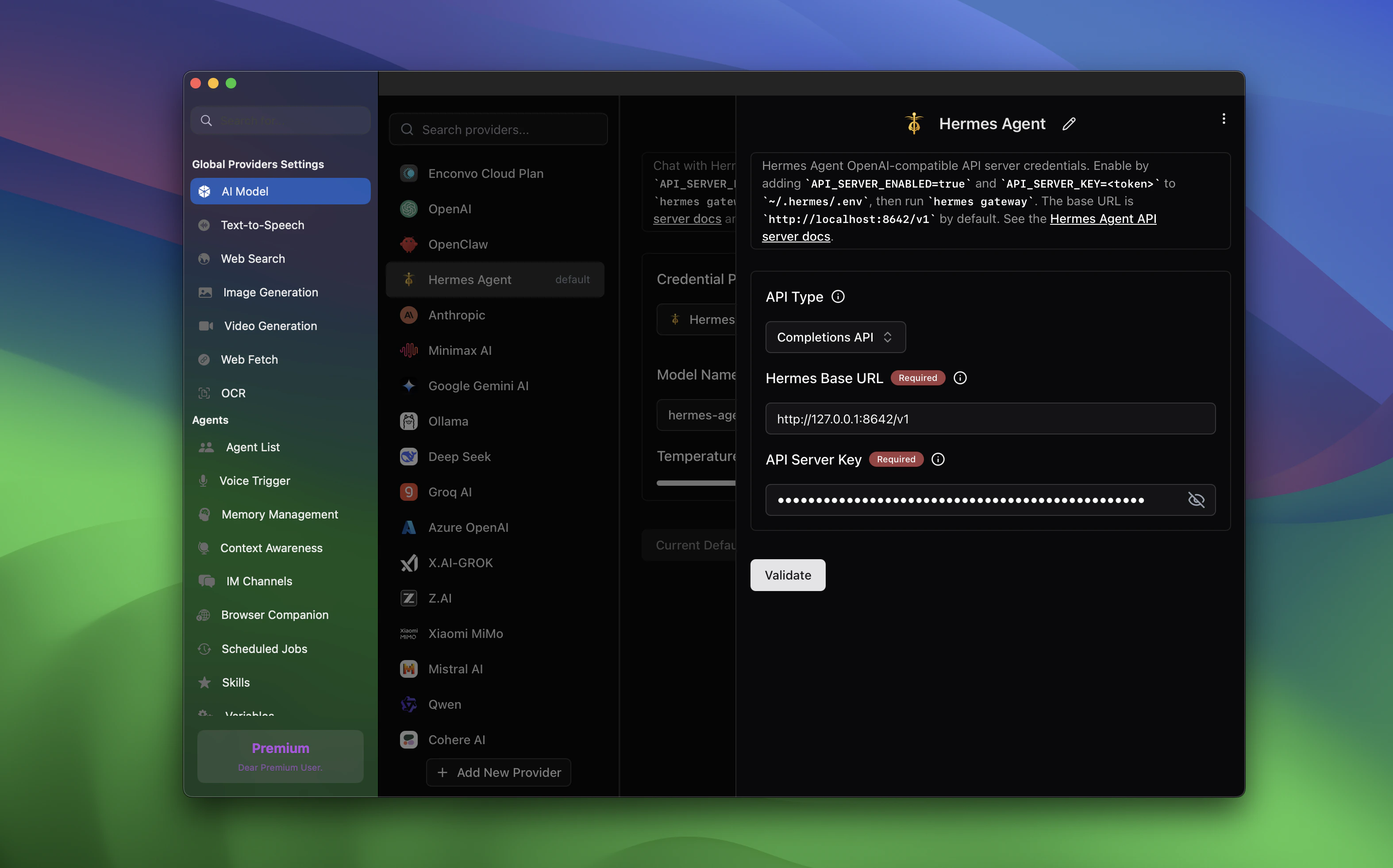

Configure Hermes in EnConvo

Open EnConvo Settings -> AI Model -> Hermes Agent, then configure the credential provider.

| Setting | Value |
|---|---|
| Hermes Base URL | `http://127.0.0.1:8642/v1` |
| API Server Key | `API_SERVER_KEY` from `~/.hermes/.env` |

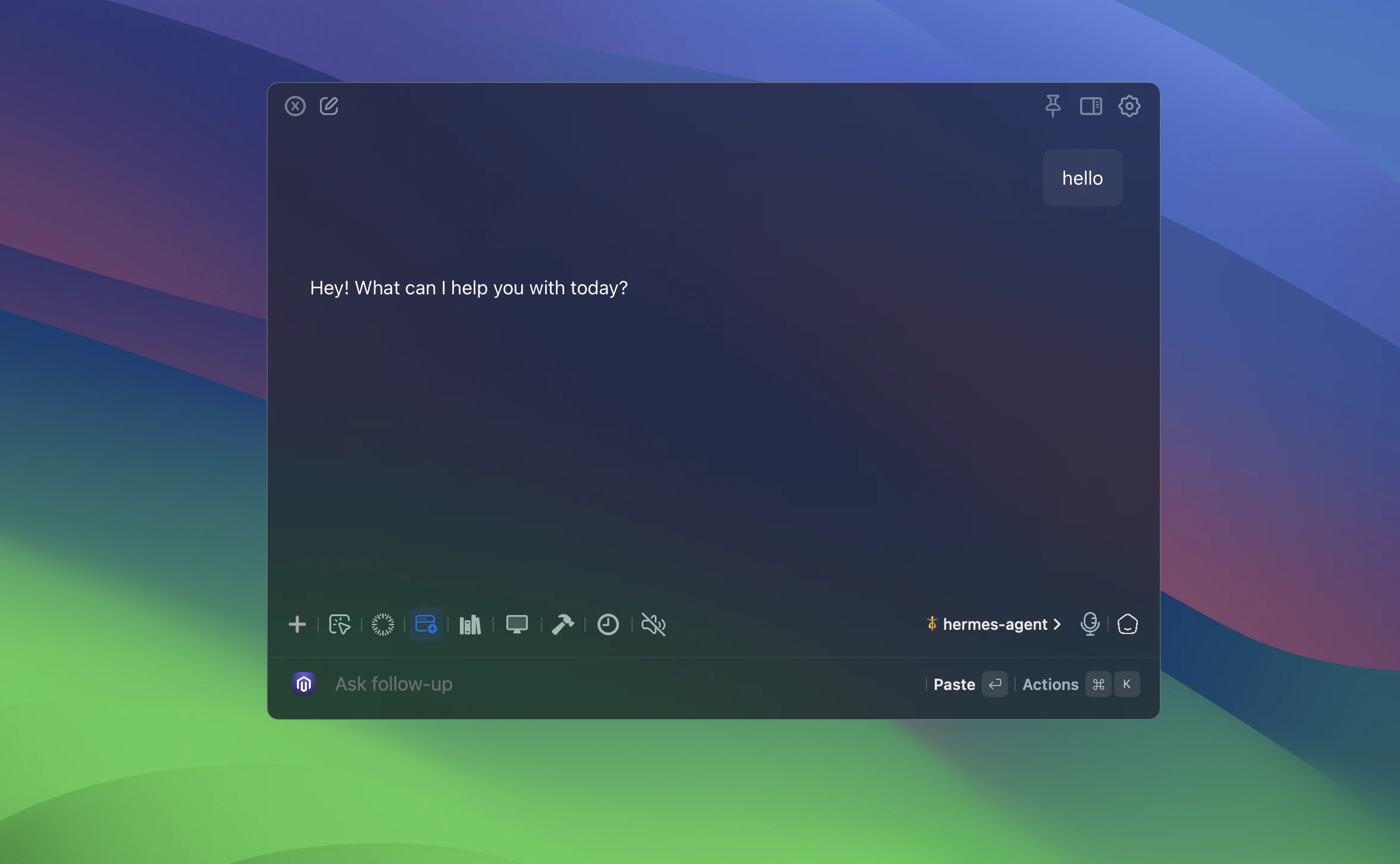

Use Hermes as an AI Model

Once configured, Hermes appears in the model selector. Choose Hermes Agent and select the advertised model, usually `hermes-agent`.

Open the model picker

In chat or another model-powered EnConvo feature, click the current model name.

EnConvo loads Hermes models from the Hermes `/v1/models` endpoint. If Hermes is not running or the endpoint is unavailable, EnConvo falls back to `hermes-agent`.

Create an EnConvo Agent for Hermes

You can also create an EnConvo agent that coordinates Hermes Agent. This is useful when you want an EnConvo-facing workflow, tool set, or prompt around Hermes’ agent runtime.

Write coordinator instructions

Give the EnConvo agent instructions that explain how it should work with Hermes.

LAN Access

If Hermes is running on another machine, use the host machine’s LAN IP in EnConvo, for example `http://<gateway-host>:8642/v1`, where `<gateway-host>` is that machine’s LAN IP.

Troubleshooting

Hermes Agent does not appear in the model picker

Make sure the Hermes provider is enabled in Settings -> AI Model. If the model list is empty, confirm the gateway is running with `hermes gateway status`.
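To see what a client would receive from the model list, you can query `/v1/models` directly. The sketch below is an illustration only: it assumes the endpoint returns the usual OpenAI-style `{"data": [{"id": ...}]}` payload and accepts a Bearer token, and it mimics the documented fallback to `hermes-agent` when the endpoint is unavailable. `parse_model_ids` and `list_models` are hypothetical names, not EnConvo internals.

```python
import json
import urllib.request

FALLBACK_MODELS = ["hermes-agent"]


def parse_model_ids(payload) -> list[str]:
    """Extract model IDs from an OpenAI-style model list payload."""
    if not isinstance(payload, dict):
        return []
    return [item["id"] for item in payload.get("data", [])
            if isinstance(item, dict) and "id" in item]


def list_models(base_url: str, api_key: str, timeout: float = 5.0) -> list[str]:
    """Return advertised model IDs, or the fallback list if the call fails."""
    req = urllib.request.Request(
        base_url.rstrip("/") + "/models",
        # Assumption: Bearer-token auth, as in the OpenAI API.
        headers={"Authorization": f"Bearer {api_key}"},
    )
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            ids = parse_model_ids(json.load(resp))
    except (OSError, ValueError):
        # Connection errors and timeouts are OSError; invalid JSON is ValueError.
        return list(FALLBACK_MODELS)
    return ids or list(FALLBACK_MODELS)


if __name__ == "__main__":
    print(list_models("http://127.0.0.1:8642/v1", "<API_SERVER_KEY>"))
```

If this prints only the fallback entry while the gateway is supposedly running, the gateway status and API key are the first things to check.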

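Validation fails with unauthorized

Confirm that the API Server Key entered in EnConvo matches the `API_SERVER_KEY` value in `~/.hermes/.env`.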

Connection fails

Confirm the API server is healthy with `curl http://127.0.0.1:8642/v1/health`. If EnConvo runs on another device, use the host’s LAN IP and make sure Hermes is bound for network access.
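The same health check can be scripted, for example to test a LAN address from another device. This is a rough sketch that assumes any 2xx response from the health endpoint means the server is up and that the endpoint needs no authentication, as the `curl` command above suggests. `health_url` and `is_healthy` are hypothetical helpers.

```python
import http.client
import urllib.request


def health_url(base_url: str) -> str:
    """Derive the health endpoint from a base URL ending in /v1."""
    return base_url.rstrip("/") + "/health"


def is_healthy(base_url: str, timeout: float = 3.0) -> bool:
    """Return True if the health endpoint answers with a 2xx status."""
    try:
        with urllib.request.urlopen(health_url(base_url), timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (OSError, http.client.HTTPException):
        # Refused connections, timeouts, and HTTP error statuses all land here.
        return False


if __name__ == "__main__":
    print(is_healthy("http://127.0.0.1:8642/v1"))
```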

Wrong base URL

Enter `http://<gateway-host>:8642/v1`. Do not include `/chat/completions` in the EnConvo base URL field.
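As an illustration of the expected shape, the following sketch normalizes a pasted URL into the `.../v1` form. It is a hypothetical helper for checking your own input, not something EnConvo is known to do automatically, and the LAN address in the usage example is made up.

```python
def normalize_base_url(raw: str) -> str:
    """Trim endpoint paths and make sure the URL ends in /v1."""
    url = raw.strip().rstrip("/")
    # Remove an endpoint path that was pasted by mistake.
    for suffix in ("/chat/completions", "/models"):
        if url.endswith(suffix):
            url = url[: -len(suffix)]
    # Add the API version prefix if it is missing.
    if not url.endswith("/v1"):
        url += "/v1"
    return url


if __name__ == "__main__":
    print(normalize_base_url("http://127.0.0.1:8642/v1/chat/completions"))
    # 192.168.1.20 is a placeholder LAN address.
    print(normalize_base_url("http://192.168.1.20:8642"))
```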

Need gateway logs

Read Hermes gateway logs with `tail -f ~/.hermes/logs/gateway.log` and errors with `tail -f ~/.hermes/logs/gateway.error.log`.
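When you want a one-off snapshot instead of a live `tail -f`, for example to attach to a bug report, a short script can collect the last lines of both files. This sketch assumes the log paths given above and UTF-8 text. `last_lines` is a hypothetical helper.

```python
from collections import deque
from pathlib import Path


def last_lines(path: Path, count: int = 20) -> list[str]:
    """Return the last `count` lines of a text file without trailing newlines."""
    with path.open("r", encoding="utf-8", errors="replace") as handle:
        # deque with maxlen keeps only the most recent lines while reading.
        return [line.rstrip("\n") for line in deque(handle, maxlen=count)]


if __name__ == "__main__":
    log_dir = Path.home() / ".hermes" / "logs"
    for name in ("gateway.log", "gateway.error.log"):
        log_path = log_dir / name
        print(f"== {log_path} ==")
        if log_path.exists():
            print("\n".join(last_lines(log_path)))
        else:
            print("(not found)")
```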